What is an SEO Specialist?

An SEO Specialist is a digital‑marketing expert who optimises websites and content to rank higher in search engines, attract more organic traffic, and convert that traffic into leads and sales. In today’s hyper‑competitive London market, having a top‑calibre SEO Specialist isn’t a luxury; it’s the difference between being invisible online and dominating your niche.

Hire a top SEO Specialist in London now

SEO Specialist Case Studies

The most successful brands in London and beyond rely on proven SEO strategies that blend technical precision with content that resonates with both users and search algorithms. The following case studies show how modern SEO Specialists dramatically increased visibility, traffic and qualified leads between 2024 and 2026: exactly the kind of results you can expect when you work with an expert rather than a generic agency.

B2B SaaS SEO Growth (London, 2024)

Case study published by a London‑based SEO firm, 2024

A London B2B SaaS company resolved critical technical debt, improved its site architecture and built a focused content strategy. Within months, the site doubled its organic traffic and increased qualified pipeline leads by over 150%, showing that a deep‑dive SEO approach delivers measurable ROI.

Legal Services Visibility Surge (UK, 2025)

Case study from an SEO‑focused London–Surrey agency, published March 2025

A regional law firm implemented a content‑driven SEO plan across multiple practice areas. Over six months, organic visibility doubled and the site began ranking in the top 10 for high‑value terms such as “probate solicitors London”, directly feeding the firm’s client acquisition pipeline.

Local Service SEO Boom (London, 2026)

Case study shared by a London SEO consultant, published January 2026

A local service business in London implemented a local‑SEO strategy covering on‑page optimisation, structured data and off‑page backlinks. Within 60 days, local traffic nearly doubled and qualified leads rose by over 180%, demonstrating how targeted SEO can rapidly transform a small business’s bottom line.

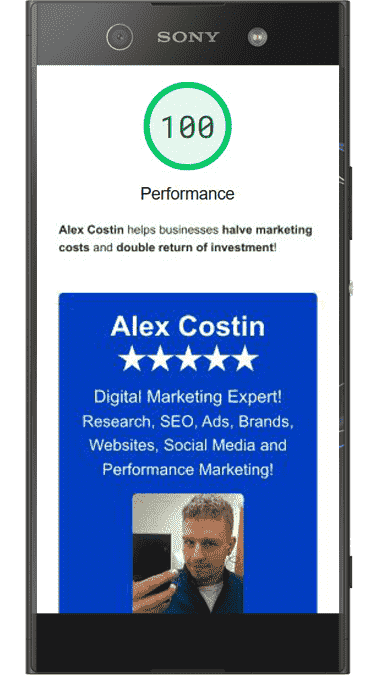

Alex Costin as an SEO Specialist

Alex Costin is a 360° Digital Marketing Expert and SEO Specialist with over 17 years of hands‑on experience designing and executing high‑impact, multilingual SEO campaigns across the EMEA region. His CV at alexcostin.com highlights a track record of turning complex technical challenges into top‑ranking, revenue‑driving websites that consistently outperform agency‑built sites on key metrics such as speed, relevance and conversion.

- Proven global SEO leadership – Alex has led SEO strategy for international brands, delivering technical audits, content ecosystems and on‑page optimisation that push websites into the top positions of highly competitive SERPs, including record‑breaking first‑page rankings in under two weeks.

- Performance‑driven SEM integration – With over 12 years in SEM management, he links SEO with paid search to cut PPC costs by up to 90% and maximise ROI, raising Quality Scores through data‑driven landing page optimisation instead of generic templates.

- Technical perfection and PageSpeed excellence – Alex builds and optimises websites on Drupal and Bootstrap, achieving 100/100 PageSpeed scores across Performance, Accessibility, Best Practices and SEO. Speed, accessibility and sound technical structure are exactly the qualities that search engines and AI overviews increasingly treat as trust signals.

- Off‑page authority and backlink networks – Through his own 5000+ blog posts and a partner network of 5000+ additional sites, he creates strategic off‑page backlinks that amplify on‑page SEO, giving clients a link‑profile advantage most UK agencies cannot match.

- AI‑powered workflow efficiency – His expertise in AI allows him to automate content drafts, performance analysis and social‑media planning, freeing up time for strategy and creative thinking while slashing the need for large in‑house teams or external freelancers.

Work directly with a top SEO Specialist in London

For businesses in London, England, working with Alex Costin means access to an SEO Specialist whose daily rate starts from £1000. With AI‑accelerated workflows and deep technical expertise, one day with Alex can outperform five days of agency work, giving you a faster, leaner path to top‑ranking results.

SEO Specialist Excellence

Landing page quality refers to how well a page fulfils a user’s intent, loads quickly, and is technically sound across speed, accessibility and structure. High‑quality landing pages are not only easier for visitors to read and interact with, but they also send strong positive signals to search engines and AI‑driven search overviews.

When a website consistently delivers high‑quality landing pages, it tends to rank higher in organic search and to gain more visibility in AI‑generated answers and snippets. Search engines reward sites that offer fast, accurate, accessible content, so landing page quality is now a core ranking and visibility factor, not just a “nice‑to‑have”.

To see this excellence in practice, visit the PageSpeed Insights audits for the following Alex Costin projects, each of which scores 100/100 across Performance, Accessibility, Best Practices and SEO: alexcostin.com, plato.alexcostin.com and deceneus.alexcostin.com. Each report shows how technical precision translates directly into better search and AI visibility.
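The same four category scores can also be checked programmatically, for example to monitor a site after each release. The Python sketch below is a minimal illustration, assuming the public PageSpeed Insights API v5 endpoint and its `lighthouseResult.categories` response shape, where each category score is a 0–1 value that the report displays as 0–100. The helper names (`build_psi_url`, `category_scores`, `is_perfect`) and the sample response are hypothetical and for illustration only; they are not real audit data.

```python
from urllib.parse import urlencode

# Assumed: the public PageSpeed Insights API v5 endpoint.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"

# Lighthouse category ids as they appear under lighthouseResult.categories.
CATEGORIES = ["performance", "accessibility", "best-practices", "seo"]


def build_psi_url(page_url: str, strategy: str = "MOBILE") -> str:
    """Build a PSI v5 request URL that asks for all four report categories."""
    params = [("url", page_url), ("strategy", strategy)]
    # The API's `category` parameter takes upper-case enum values, e.g. BEST_PRACTICES.
    params += [("category", c.upper().replace("-", "_")) for c in CATEGORIES]
    return PSI_ENDPOINT + "?" + urlencode(params)


def category_scores(psi_response: dict) -> dict:
    """Convert Lighthouse's 0-1 category scores into the 0-100 figures shown in the report."""
    categories = psi_response["lighthouseResult"]["categories"]
    # A category that failed to run can report a null score; count it as 0 here.
    return {c: round((categories[c].get("score") or 0) * 100) for c in CATEGORIES}


def is_perfect(psi_response: dict) -> bool:
    """Return True only if every category scores 100/100."""
    return all(score == 100 for score in category_scores(psi_response).values())


# Hypothetical, hand-written response fragment for illustration only (not real audit data).
sample_response = {
    "lighthouseResult": {
        "categories": {
            "performance": {"score": 1.0},
            "accessibility": {"score": 1.0},
            "best-practices": {"score": 1.0},
            "seo": {"score": 0.92},
        }
    }
}

print(category_scores(sample_response))  # {'performance': 100, 'accessibility': 100, 'best-practices': 100, 'seo': 92}
print(is_perfect(sample_response))       # False
```

To audit a live page, one could fetch `build_psi_url("https://alexcostin.com")` with `urllib.request.urlopen`, parse the body with `json.load` and pass the result to `is_perfect`; regular automated use of the API may also need an API key supplied through its `key` parameter.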

Hire Alex Costin as your business SEO Specialist

Bringing Alex Costin on board as your SEO Specialist creates a powerful, end‑to‑end engine for growth, from market research and technology to AI‑driven content and performance measurement. Below is how each pillar of his work delivers a competitive edge for London businesses.

Market Research

Alex uses both primary and secondary data to uncover hidden market opportunities, customer behaviour patterns and competitor weaknesses. By translating this intelligence into actionable, ROI‑focused strategies, he helps businesses position themselves where competitors are weakest and demand is strongest.

Website Development

A new website built on Drupal and Bootstrap, delivered in as little as two weeks on a fresh server, can easily outperform template‑based WordPress sites used by most local, regional and national competitors. With 100/100 PageSpeed scores, excellent Core Web Vitals and pixel‑perfect UX, these sites gain an instant advantage in both search rankings and visitor retention.

SEO

Deep keyword research combined with maximum landing page quality ensures that every page is built to rank and convert. Off‑page backlinks from Alex’s 5000+ blog posts and his partners’ 5000+ sites create a powerful authority network that very few UK businesses can match, giving clients a durable SEO advantage over time.

Artificial Intelligence

Alex leverages AI at an expert level to create engaging content, automate social‑media campaigns and optimise workflows, which drastically reduces the time and cost of manual, repetitive tasks. This approach often eliminates the need to hire additional employees or third‑party agencies just to maintain a constant digital presence.

Performance

Weekly performance reports allow Alex to review campaigns, identify quick wins and bottlenecks, and build a prioritised list of improvements. He then discusses these insights with decision‑makers, fine‑tunes the strategy and implements changes, creating a continuous cycle of improvement that steadily increases ROI.

SEO Specialist Summary

Using Alex Costin as your SEO Specialist means gaining access to a data‑driven, AI‑enhanced expert who combines technical mastery with creative strategy and real‑world results. From lightning‑fast websites and 100/100 PageSpeed scores to powerful off‑page backlink networks and AI‑powered content, his services are designed to help London businesses outpace, outperform and out‑rank their competitors.

Start your SEO Specialist journey with Alex Costin

Whether you need a full‑stack SEO overhaul, a lightning‑fast new website, or an AI‑driven content and performance programme, Alex Costin is ready to help you dominate search engines and AI‑driven answers. The first step is simple: get in touch.